The images that you see on the computer screen are requested by the CPU from the GPU. These requests are carried out in two main steps:

- First, the render state is configured, which corresponds to the set of stages from geometry processing up to pixel processing.

- Then, the object is drawn on the screen.

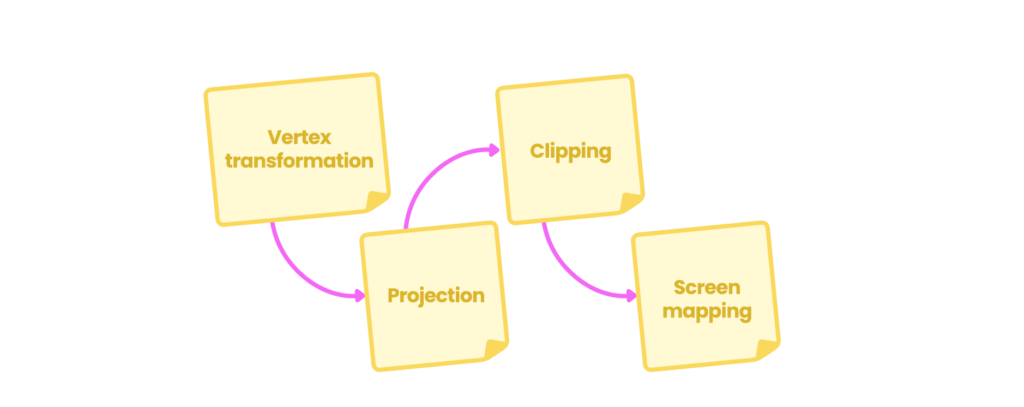

The geometry processing phase occurs on the GPU and is responsible for processing the vertices of your object. It is divided into four subprocesses:

- Vertex Shading.

- Projection.

- Clipping.

- Screen mapping.

Once the primitives have been assembled in the application stage, the Vertex Shading, better known as the Vertex Shader Stage, carries out two main tasks:

- It calculates the position of the object's vertices.

- Then it transforms those positions into different coordinate spaces so that they can be projected onto the computer screen.

Also, within this subprocess, you can select properties that you want to pass on to the following stages, for example, Normals, Tangents, UV coordinates, etc.
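The two tasks of the vertex shader stage can be sketched in plain Python. This is only an illustration, not shader code: the names `mat_vec`, `translation`, and `vertex_stage` are hypothetical, matrices are 4x4 lists of rows, and the view matrix assumes a camera that looks down the negative Z axis.

```python
def mat_vec(m, v):
    """Multiply a 4x4 matrix (list of rows) by a 4-component column vector."""
    return [sum(m[r][c] * v[c] for c in range(4)) for r in range(4)]


def translation(tx, ty, tz):
    """Build a 4x4 translation matrix."""
    return [
        [1.0, 0.0, 0.0, tx],
        [0.0, 1.0, 0.0, ty],
        [0.0, 0.0, 1.0, tz],
        [0.0, 0.0, 0.0, 1.0],
    ]


def vertex_stage(vertex, model, view):
    """Toy vertex stage: transform the position, forward the other attributes."""
    position_os = list(vertex["position"]) + [1.0]  # homogeneous, w = 1
    position_ws = mat_vec(model, position_os)       # object space -> world space
    position_vs = mat_vec(view, position_ws)        # world space -> view space
    return {
        "position_ws": position_ws[:3],
        "position_vs": position_vs[:3],
        # Attributes selected to be passed on to later stages, unchanged here.
        # (A real shader would usually rotate normals with the model matrix;
        # the pure translation in this example leaves them as they are.)
        "normal": vertex["normal"],
        "uv": vertex["uv"],
    }


vertex = {"position": (1.0, 1.0, 0.0), "normal": (0.0, 0.0, 1.0), "uv": (0.5, 0.5)}
model = translation(2.0, 0.0, 0.0)   # object placed at x = 2 in the world
view = translation(0.0, 0.0, -5.0)   # assumed camera at z = 5, looking down -Z
out = vertex_stage(vertex, model, view)
print(out["position_ws"], out["position_vs"])
```

The vertex ends up at `[3.0, 1.0, 0.0]` in world space and `[3.0, 1.0, -5.0]` in view space, while the normal and UV coordinates travel along untouched for the later stages to use.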

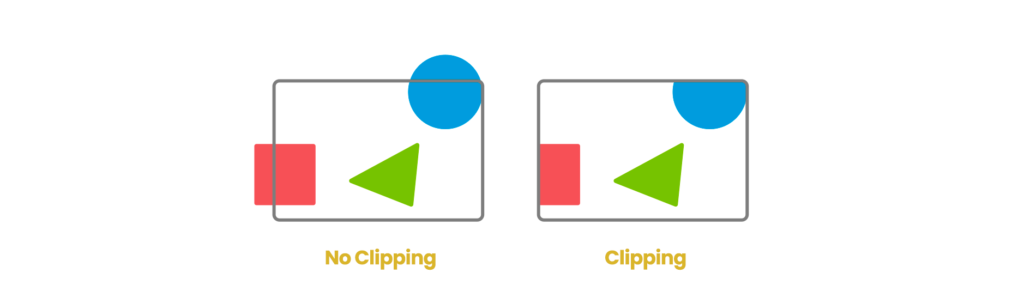

Projection and clipping also occur in this phase, and they vary according to the camera properties in the scene, that is, whether the camera is configured as perspective or orthographic (parallel). It is worth mentioning that the complete rendering process only occurs for those elements that are within the camera frustum, also known as the view volume.
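To make the difference between the two camera modes concrete, here is a minimal sketch of both projections. It assumes an OpenGL-style convention (view space looking down negative Z, normalized device coordinates in the range -1 to 1); other graphics APIs use different depth ranges, and the function names are hypothetical.

```python
import math


def perspective(fov_y_deg, aspect, near, far):
    """Perspective projection matrix (OpenGL-style convention, assumed)."""
    f = 1.0 / math.tan(math.radians(fov_y_deg) / 2.0)
    return [
        [f / aspect, 0.0, 0.0, 0.0],
        [0.0, f, 0.0, 0.0],
        [0.0, 0.0, (far + near) / (near - far), 2.0 * far * near / (near - far)],
        [0.0, 0.0, -1.0, 0.0],
    ]


def orthographic(half_height, aspect, near, far):
    """Symmetric orthographic (parallel) projection matrix."""
    return [
        [1.0 / (half_height * aspect), 0.0, 0.0, 0.0],
        [0.0, 1.0 / half_height, 0.0, 0.0],
        [0.0, 0.0, -2.0 / (far - near), -(far + near) / (far - near)],
        [0.0, 0.0, 0.0, 1.0],
    ]


def project(matrix, point_vs):
    """View space -> clip space -> normalized device coordinates."""
    x, y, z = point_vs
    v = [x, y, z, 1.0]
    clip = [sum(matrix[r][c] * v[c] for c in range(4)) for r in range(4)]
    w = clip[3]
    return [clip[0] / w, clip[1] / w, clip[2] / w]  # perspective divide


persp = perspective(90.0, 1.0, 0.1, 100.0)
ortho = orthographic(2.0, 1.0, 0.1, 100.0)
near_point, far_point = (1.0, 1.0, -2.0), (1.0, 1.0, -4.0)
for name, m in (("perspective", persp), ("orthographic", ortho)):
    print(name, project(m, near_point)[:2], project(m, far_point)[:2])
```

With the perspective matrix, the point that is twice as far from the camera lands about half as far from the screen center (roughly 0.25 instead of 0.5), whereas the orthographic matrix places both points at 0.5 regardless of depth.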

As an example, say there is a sphere in the scene with half of it outside the camera frustum. Only the part of the sphere that lies within the frustum is projected and kept after clipping, while the part that is out of sight is discarded from the rendering process.
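The sphere example can be approximated with a simple point test. The sketch below is a simplification: real clipping works on primitives and splits the triangles that cross the frustum boundary, while this code only classifies sample points. It assumes the same OpenGL-style perspective matrix as a typical pipeline, where a clip-space point is inside the frustum when `-w <= x, y, z <= w`; all function names are hypothetical.

```python
import math


def perspective(fov_y_deg, aspect, near, far):
    """Perspective projection matrix (OpenGL-style convention, assumed)."""
    f = 1.0 / math.tan(math.radians(fov_y_deg) / 2.0)
    return [
        [f / aspect, 0.0, 0.0, 0.0],
        [0.0, f, 0.0, 0.0],
        [0.0, 0.0, (far + near) / (near - far), 2.0 * far * near / (near - far)],
        [0.0, 0.0, -1.0, 0.0],
    ]


def to_clip(matrix, point_vs):
    """Transform a view-space point into clip space."""
    x, y, z = point_vs
    v = [x, y, z, 1.0]
    return [sum(matrix[r][c] * v[c] for c in range(4)) for r in range(4)]


def inside_frustum(clip):
    """A clip-space point is visible when x, y and z all lie within [-w, w]."""
    x, y, z, w = clip
    return all(-w <= c <= w for c in (x, y, z))


def sphere_points(center, radius, steps=16):
    """Sample points on a sphere using a latitude/longitude grid."""
    cx, cy, cz = center
    points = []
    for i in range(1, steps):
        theta = math.pi * i / steps
        for j in range(steps):
            phi = 2.0 * math.pi * j / steps
            points.append((
                cx + radius * math.sin(theta) * math.cos(phi),
                cy + radius * math.cos(theta),
                cz + radius * math.sin(theta) * math.sin(phi),
            ))
    return points


projection = perspective(90.0, 1.0, 0.1, 100.0)
# With a 90 degree field of view the right frustum plane is x = -z,
# so a sphere centered at (5, 0, -5) straddles that plane.
points = sphere_points((5.0, 0.0, -5.0), 1.0)
kept = [p for p in points if inside_frustum(to_clip(projection, p))]
discarded = len(points) - len(kept)
print(len(kept), "points kept,", discarded, "points discarded")
```

Only the samples on the camera-facing side of the right frustum plane survive the test, which mirrors the half of the sphere that remains visible.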

Once the clipped objects are in memory, they are sent to the screen mapping stage. In this stage, the three-dimensional coordinates of the objects in the scene are transformed into 2D screen coordinates, also known as screen or window coordinates.
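Screen mapping itself is a small remapping step. As a rough sketch, assuming normalized device coordinates in the range -1 to 1 and a window whose origin is at the bottom-left corner (conventions differ between graphics APIs), the hypothetical function `ndc_to_screen` below converts a point to window coordinates:

```python
def ndc_to_screen(ndc, width, height):
    """Map normalized device coordinates (-1..1) to window coordinates."""
    x, y, z = ndc
    screen_x = (x * 0.5 + 0.5) * width    # -1..1 -> 0..width
    screen_y = (y * 0.5 + 0.5) * height   # -1..1 -> 0..height (origin bottom-left)
    depth = z * 0.5 + 0.5                 # -1..1 -> 0..1, kept for depth testing
    return screen_x, screen_y, depth


print(ndc_to_screen((0.0, 0.0, 0.0), 1920, 1080))    # (960.0, 540.0, 0.5)
print(ndc_to_screen((0.5, -0.5, 1.0), 1920, 1080))   # (1440.0, 270.0, 1.0)
```

The center of the view volume maps to the center of a 1920x1080 window, and the depth value is carried along so that later stages can still decide which surface is in front.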